ARC-AGI-3 Humbles Every Frontier AI Model, Scoring Below 1% Where Humans Get 100%

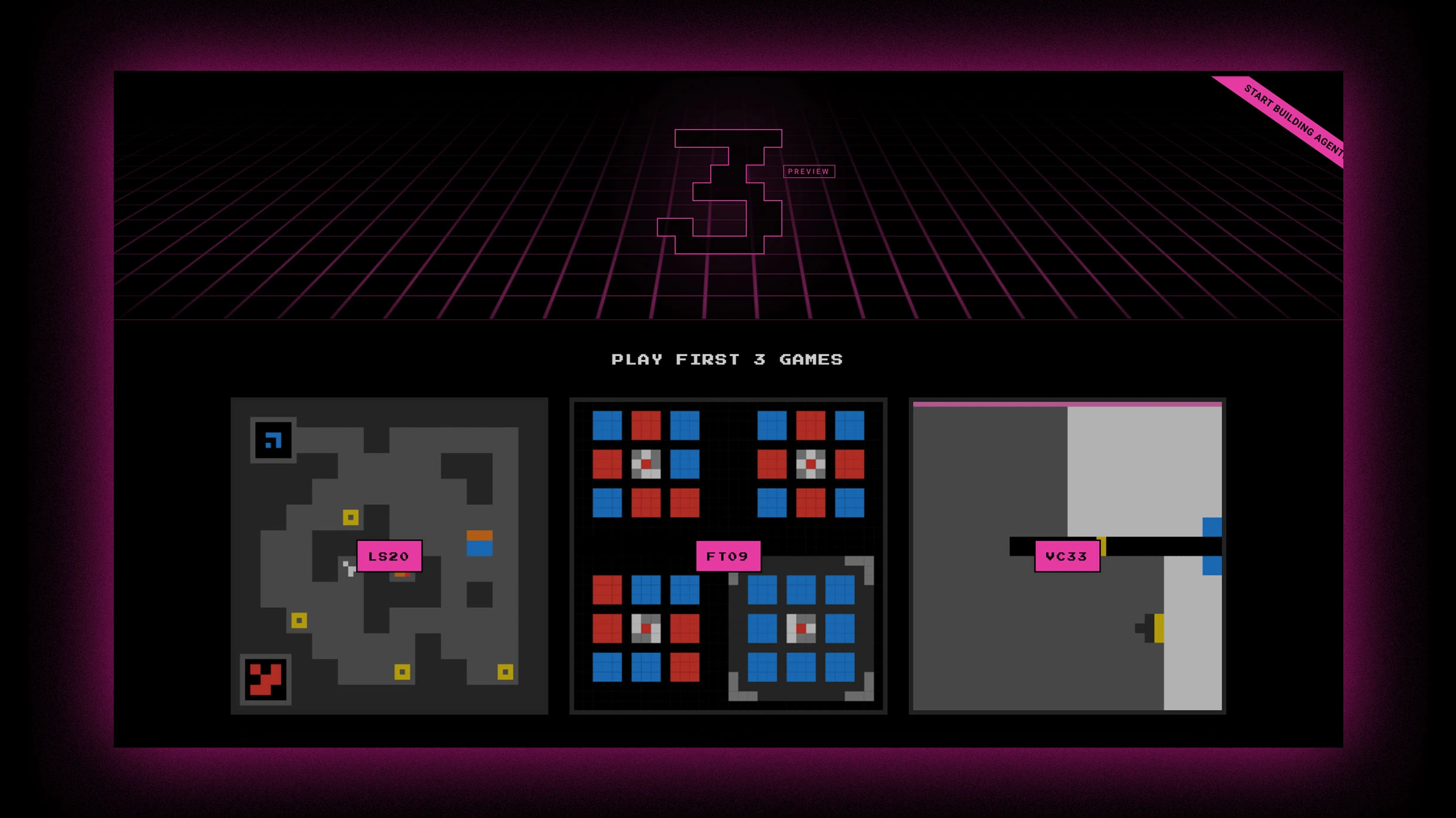

On March 25, the ARC Prize Foundation launched ARC-AGI-3, a new interactive benchmark that replaces static puzzles with turn-based games that come with no instructions and no stated goals. The results are striking: humans solve 100% of the environments, while the best AI model (Gemini 3.1 Pro) managed just 0.37%. GPT-5.4, Claude Opus 4.6, and Grok 4.2 all scored near zero. The benchmark measures something fundamentally different from previous tests: the ability to explore, adapt, and learn on the fly. It reveals a massive gap between pattern-matching at scale and genuine flexible reasoning.